Testing of ETL Processes

Data rules the world, and that is no exaggeration. Judge for yourself: data is both the goal and the result of many processes, and it is the starting point of any analytics. Forecasts, planning, and the assessment of gains and losses all rest on it.

From this, we can conclude that the ability to quickly receive, process, and store correct data in the required format and volume is, in many cases, the key to a successful business. Blockchain-related work is no exception: blockchain networks generate a huge amount of data, and that amount keeps growing rapidly.

Managing such a flow of information requires special technologies and tools. Blockchain ETL, as a set of carefully selected and combined approaches and tools, greatly simplifies data management and, as a result, helps optimize blockchain solutions. This article looks at the ETL process and at the different aspects of testing it.

Data Flow in the ETL Process

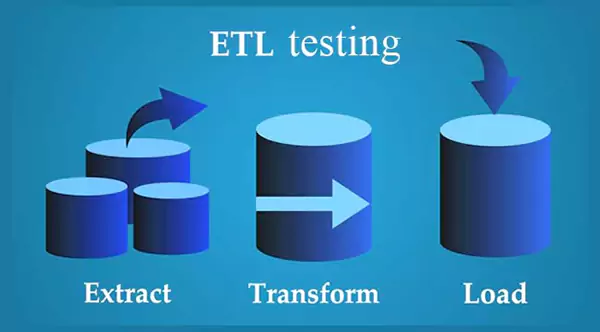

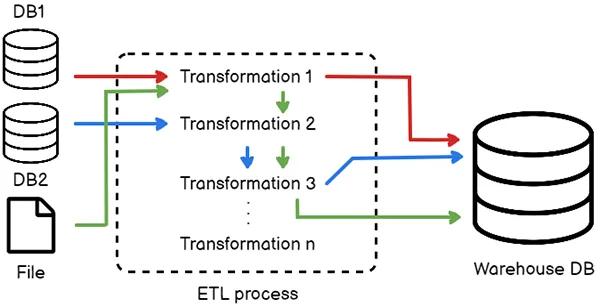

ETL can look like a complex system in which various data tools closely interact, but it comes down to three simple tasks. Data must be extracted from one or more sources; it must be transformed into a form and format suitable for further work; and it must be loaded into a database or storage where the necessary operations can be performed on it.

To put it simply, ETL can be represented as a process in which a flow of data moves from a source to a target. Its main components are the data source(s), the staging area where data accumulates, and the data warehouse (DWH). The flow is, of course, not uncontrolled or chaotic: it must follow requirements defined by the systems analyst.

A closer look at the first stage, extraction, shows that it involves more than pulling data from the source(s). Supporting processes determine which data is selected, how often it is selected, and by what rules specific records are picked for further use.
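As a rough illustration of selection rules at the extraction stage, here is a minimal sketch. The CSV source, the column names, and the `extract` helper are all hypothetical; a real pipeline would take the composition and rules from its own specification.

```python
import csv
import io

def extract(source_text, columns, predicate):
    """Read CSV text and yield only the requested columns of rows that pass the rule."""
    reader = csv.DictReader(io.StringIO(source_text))
    for row in reader:
        if predicate(row):
            yield {c: row[c] for c in columns}

# Hypothetical source: three columns, of which only two are needed downstream.
SOURCE = "id,chain,value\n1,eth,10\n2,btc,5\n"

# Selection rule (an assumption for this example): keep only "eth" rows.
selected = list(extract(SOURCE, ["id", "value"], lambda r: r["chain"] == "eth"))
```

Here `columns` plays the role of the composition of the extract and `predicate` the role of the selection rule; the frequency of selection would be handled by whatever schedules the job.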

The next stage, transformation, is also structured. It includes cleaning the data (if necessary) and bringing it into a single format. The final stage, loading, updates existing records and adds new ones in the receiving system.
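Cleaning and bringing data into a single format might look like the sketch below. The field names (`id`, `name`, `created`) and the list of accepted date formats are assumptions made for the example, not part of any particular ETL tool.

```python
from datetime import datetime

# Hypothetical input formats; a real pipeline would take these from its spec.
DATE_FORMATS = ("%Y-%m-%d", "%d.%m.%Y", "%m/%d/%Y")

def normalize_date(value):
    """Return the date as ISO 8601 (YYYY-MM-DD), or None if it cannot be parsed."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(value.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    return None

def transform(rows):
    """Clean raw rows: trim text, unify dates, drop rows without an id, drop duplicates."""
    seen = set()
    cleaned = []
    for row in rows:
        row_id = (row.get("id") or "").strip()
        if not row_id or row_id in seen:
            continue
        seen.add(row_id)
        cleaned.append({
            "id": row_id,
            "name": (row.get("name") or "").strip().title(),
            "created": normalize_date(row.get("created") or ""),
        })
    return cleaned
```

A tester can feed such a function a handful of deliberately messy rows (extra spaces, duplicate ids, mixed date formats) and compare the output with the expected clean rows.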

Loading happens in two steps: data is first loaded into the staging area and then into the DWH. From the warehouse it is exported to data marts, reports, files, and so on, that is, delivered to the user by whatever means is required.
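The two-step load can be sketched with an in-memory SQLite database. The table names `stg_customer` and `dwh_customer` are hypothetical, and `INSERT OR REPLACE` is just one simple way to update existing rows and add new ones; a production warehouse would likely use its own merge mechanism.

```python
import sqlite3

def load(conn, rows):
    """Load rows into staging, then merge staging into the warehouse table."""
    cur = conn.cursor()
    cur.execute("CREATE TABLE IF NOT EXISTS stg_customer (id INTEGER PRIMARY KEY, name TEXT)")
    cur.execute("CREATE TABLE IF NOT EXISTS dwh_customer (id INTEGER PRIMARY KEY, name TEXT)")
    # Step 1: staging holds only the current batch.
    cur.execute("DELETE FROM stg_customer")
    cur.executemany("INSERT INTO stg_customer (id, name) VALUES (?, ?)", rows)
    # Step 2: existing ids are overwritten, new ids are inserted.
    cur.execute(
        "INSERT OR REPLACE INTO dwh_customer (id, name) "
        "SELECT id, name FROM stg_customer"
    )
    conn.commit()

conn = sqlite3.connect(":memory:")
load(conn, [(1, "Alice"), (2, "Bob")])      # initial load
load(conn, [(2, "Robert"), (3, "Carol")])   # increment: one update, one new row
result = conn.execute("SELECT id, name FROM dwh_customer ORDER BY id").fetchall()
```

After the second call the warehouse table contains all three customers, with the name for id 2 updated.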

ETL Testing Tools and Tasks

For a data migration system to work correctly and without failures, it must be tested periodically, and the test engineer needs the right tools. One handy tool is the well-known file manager Total Commander. With it you can search the sources for the entities you need using regular expressions, and calculate hash sums, indexes, and many other useful values.
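The same two checks, a regular-expression search and a hash sum, can also be scripted. The sketch below uses only the Python standard library; the function names are made up for the example, and SHA-256 is an arbitrary choice of hash.

```python
import hashlib
import re

def find_matches(text, pattern):
    """Return (line_number, line) pairs for lines matching the regular expression."""
    regex = re.compile(pattern)
    return [
        (n, line)
        for n, line in enumerate(text.splitlines(), start=1)
        if regex.search(line)
    ]

def sha256_of_file(path, chunk_size=65536):
    """Compute the SHA-256 hex digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()
```

Comparing the digest of a source file with the digest of its copy in the landing area is a quick way to confirm that the file arrived unchanged.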

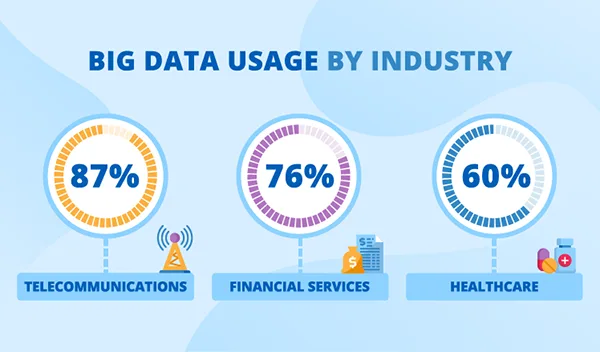

Did you know? This infographic shows the percentage of big data usage by different industries.

Other useful tools are the various studios for writing SQL queries. Test scenarios should use data that is very close to real data, so it is advisable to analyze the data beforehand. For this you can use analytical BI platforms such as Tableau, or simpler tools such as Notepad.

Before testing begins, the tester needs to understand where the expected results will come from. Sources of expected results include so-called test oracles, testing heuristics, and so on. Testing tasks can broadly be divided into two types: testing a data slice, i.e. the initial load of a large portion of data, and testing subsequent increments, i.e. later loads of new or changed data. The general testing tasks are:

- checking the movement of information from the source to the target system,

- checking auxiliary tasks for data transformation,

- checking that data extraction and transformation meet requirements and expectations,

- checking data exports to data marts, files, and so on,

- checking the handling of bad files,

- software verification for each integration.
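The first task in the list, checking the movement of data from source to target, often comes down to a reconciliation. Below is a minimal sketch that assumes both sides can be read as lists of dictionaries; the `reconcile` helper and its report keys are hypothetical.

```python
import hashlib

def row_fingerprint(row, columns):
    """Hash the selected columns of a row so rows can be compared cheaply."""
    joined = "\x1f".join(str(row[c]) for c in columns)
    return hashlib.sha256(joined.encode("utf-8")).hexdigest()

def reconcile(source_rows, target_rows, key, columns):
    """Compare source and target by key; report missing, unexpected, and changed rows."""
    src = {r[key]: row_fingerprint(r, columns) for r in source_rows}
    tgt = {r[key]: row_fingerprint(r, columns) for r in target_rows}
    return {
        "missing_in_target": sorted(src.keys() - tgt.keys()),
        "unexpected_in_target": sorted(tgt.keys() - src.keys()),
        "changed": sorted(k for k in src.keys() & tgt.keys() if src[k] != tgt[k]),
    }
```

An empty report (three empty lists) means every source row reached the target with the compared columns intact. When the transformation is expected to change values, the comparison should run against the expected output rather than the raw source.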

Here's a fun fact:

By an often-cited estimate, around 2.5 quintillion bytes of data are generated each day!

Checking Data Loading and Unloading

When checking the data loading stage, the tester must analyze the test object and understand which data is correct and which is "crooked" (malformed) and should not be there. To do this, the tester uses various static analysis techniques.

Loading into the staging area and loading into the target DWH can be checked at the same time. When checking the export stage, for example export to a file, the tester must check at least:

- formation of the file name,

- file structure,

- field separators, line delimiters, and the formatting of the various data types,

- the data itself.
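The checks in this list can be automated along the following lines. The naming convention (`customers_YYYYMMDD.csv`), the expected header, the `;` separator, and the LF line ending are all assumptions made for the example; the real values come from the export specification.

```python
import csv
import io
import re

# Hypothetical conventions for illustration only.
NAME_PATTERN = re.compile(r"^customers_\d{8}\.csv$")
EXPECTED_HEADER = ["id", "name", "created"]

def check_export(file_name, content, delimiter=";"):
    """Return a list of problems found in an exported file; an empty list means it passed."""
    problems = []
    if not NAME_PATTERN.match(file_name):
        problems.append("unexpected file name")
    if "\r\n" in content:
        problems.append("CRLF line endings, expected LF")
    rows = list(csv.reader(io.StringIO(content, newline=""), delimiter=delimiter))
    if not rows or rows[0] != EXPECTED_HEADER:
        problems.append("unexpected header")
        return problems
    for line_no, row in enumerate(rows[1:], start=2):
        if len(row) != len(EXPECTED_HEADER):
            problems.append(
                f"line {line_no}: expected {len(EXPECTED_HEADER)} fields, got {len(row)}"
            )
        elif not row[0].isdigit():
            problems.append(f"line {line_no}: id is not numeric")
    return problems
```

Returning a list of problems rather than stopping at the first one lets a single run report everything that is wrong with a file.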

In addition, the tester may face auxiliary verification tasks, such as checking views and link tables. In that case, the first things to focus on are testing the algorithm, identifying the entry points into each algorithm, partitioning data sets into subsets, and so forth.

Conclusion

Integrating the ETL process into business operations gives the organization confidence in the integrity of its big data and the intelligence gained from it, and it also helps reduce risk. This article covered the testing of ETL processes: the flow of data through the pipeline, testing tools and tasks, and the checks on data loading and unloading that help ensure data quality.