Why AI Music Generators Reshape Creative Starting Points

The biggest barrier to finishing a music track is often the path from idea to first draft. That initial push to give an idea shape tends to feel like the hardest part. Gathering the right tools, working out melodies, and getting the timing right are more difficult than most people admit.

This is exactly where an AI Music Generator can remove friction from the music creation process. Instead of working manually through separate layers of composition, production, and recording, the user describes what they want and the tool handles those stages in a single generation step.

But how does this actually happen? The sections below look at why AI music generators reshape creative starting points and how they turn a description into musical output.

Key takeaways

- AI music generators change how a track gets started by lowering the effort needed to reach a usable first draft.

- Instead of defining every detail manually, you share your intent and the tool handles the rest.

- Traditional production methods remain more useful where precision is required.

How Language Translates Into Musical Output

At its core, the system operates through a layered transformation process that turns descriptive language into structured sound. The process breaks down into three stages:

Mapping Descriptions to Musical Attributes

When a user enters something like “slow emotional piano,” the system interprets:

- “slow” as a lower tempo

- “emotional” as minor key tendencies

- “piano” as a specific timbre

This mapping is not exact, but it follows recognizable patterns.
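
To make the idea concrete, here is a minimal Python sketch of that kind of mapping. It is only an illustration: the keyword table, the default values, and the `interpret_prompt` function are all hypothetical, and a real generator would learn these associations from data rather than look them up in a fixed table.

```python
# Hypothetical keyword table for illustration only.
# Real generators learn these associations; they do not use a lookup like this.
KEYWORD_HINTS = {
    "slow": {"tempo_bpm": 65},
    "fast": {"tempo_bpm": 140},
    "emotional": {"mode": "minor"},
    "happy": {"mode": "major"},
    "piano": {"instrument": "piano"},
    "guitar": {"instrument": "guitar"},
}

# Assumed fallback values when the prompt says nothing about an attribute.
DEFAULTS = {"tempo_bpm": 100, "mode": "major", "instrument": "piano"}


def interpret_prompt(prompt: str) -> dict:
    """Return rough musical attributes for a free-text prompt."""
    attributes = dict(DEFAULTS)
    for word in prompt.lower().split():
        # Strip simple punctuation, then apply any hint tied to the word.
        attributes.update(KEYWORD_HINTS.get(word.strip(".,!?"), {}))
    return attributes


print(interpret_prompt("slow emotional piano"))
# {'tempo_bpm': 65, 'mode': 'minor', 'instrument': 'piano'}
```

Words the table does not know are simply ignored, which is one small reason the mapping in any such system is approximate rather than exact.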

Constructing Musical Structure Automatically

From these inputs, the system builds:

- chord progressions

- melodic lines

- rhythmic foundations

These elements are assembled based on learned relationships rather than explicit rules.
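
For readers who want to see what "a chord progression" looks like as data, the sketch below builds one from explicit music-theory rules. This is deliberately not how a learned model works; it is a hand-written stand-in, and the function names, the choice of A minor, and the i, VI, III, VII degree pattern are assumptions made for the example.

```python
# Semitone offsets of each scale degree from the root note.
MAJOR_STEPS = [0, 2, 4, 5, 7, 9, 11]
MINOR_STEPS = [0, 2, 3, 5, 7, 8, 10]  # natural minor


def triad(root_midi: int, mode: str, degree: int) -> list[int]:
    """Build the diatonic triad on a 1-based scale degree as MIDI note numbers."""
    steps = MINOR_STEPS if mode == "minor" else MAJOR_STEPS
    notes = []
    for offset in (0, 2, 4):  # stack scale thirds: root, third, fifth
        index = degree - 1 + offset
        octave, position = divmod(index, 7)  # wrap past the top of the scale
        notes.append(root_midi + 12 * octave + steps[position])
    return notes


def progression(root_midi: int, mode: str, degrees: list[int]) -> list[list[int]]:
    """Return one triad per requested scale degree."""
    return [triad(root_midi, mode, d) for d in degrees]


# A minor (A3 = MIDI 57), degrees i, VI, III, VII.
print(progression(57, "minor", [1, 6, 3, 7]))
# [[57, 60, 64], [65, 69, 72], [60, 64, 67], [67, 71, 74]]
```

The output is four chords (A minor, F major, C major, G major) expressed as note numbers, which is the kind of intermediate structure a melody and rhythm could then be layered on.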

Rendering a Complete Audio Track

Finally, everything is synthesized into a full piece:

- layered instrumentation

- optional vocals

- finished waveform output

The result feels closer to a finished track than a draft.
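
As a rough picture of the final "waveform output" step, the following sketch turns a handful of MIDI note numbers into WAV audio using only the Python standard library. Plain sine waves are nothing like the layered instrumentation a real generator produces; the sample rate, the `render_chord` function, and the 0.8 volume factor are assumptions chosen to keep the example small.

```python
import io
import math
import struct
import wave

SAMPLE_RATE = 22050  # samples per second; an arbitrary choice for the sketch


def midi_to_hz(note: int) -> float:
    """Convert a MIDI note number to frequency (A4 = MIDI 69 = 440 Hz)."""
    return 440.0 * 2 ** ((note - 69) / 12)


def render_chord(notes: list[int], seconds: float = 1.0) -> bytes:
    """Mix one sine wave per note and return the result as WAV file bytes."""
    frame_count = int(SAMPLE_RATE * seconds)
    frames = bytearray()
    for i in range(frame_count):
        t = i / SAMPLE_RATE
        mixed = sum(math.sin(2 * math.pi * midi_to_hz(n) * t) for n in notes)
        # Average the voices and leave headroom so the 16-bit sample cannot clip.
        sample = int(32767 * 0.8 * mixed / len(notes))
        frames += struct.pack("<h", sample)
    buffer = io.BytesIO()
    with wave.open(buffer, "wb") as wav:
        wav.setnchannels(1)   # mono
        wav.setsampwidth(2)   # 16-bit samples
        wav.setframerate(SAMPLE_RATE)
        wav.writeframes(bytes(frames))
    return buffer.getvalue()


# Half a second of an A minor triad (A3, C4, E4).
wav_bytes = render_chord([57, 60, 64], seconds=0.5)
```

Writing `wav_bytes` to a file with a `.wav` extension gives something a media player can open, which is the sense in which the last stage produces a finished waveform rather than a score.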

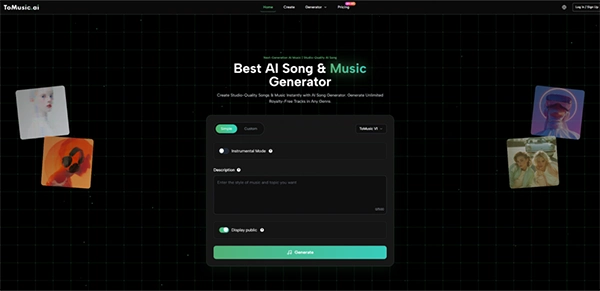

How The Platform is Actually Used in Practice

Using an AI music generator well is less about starting from scratch and more about following a guided process, step by step. Three steps cover how these platforms are typically used:

Step One: Enter Prompt Or Lyrics Input

Users can either:

- Describe a style or mood

- Input structured lyrics

The two approaches give different kinds of control over the output.

Step Two: Choose Style and General Preferences

Options typically include:

- genre

- mood

- vocal presence

These guide the generation but do not fully determine it.

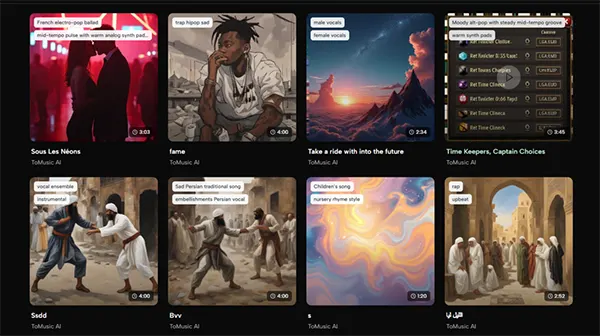

Step Three: Generate and Compare Outputs

The system produces multiple versions. In practice:

- Variation is high

- Selection becomes important

- Iteration improves results

This process feels more like filtering than building.
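
The "filtering" pattern can be sketched in a few lines of Python. Everything here is a stand-in: `generate_candidates` fakes a generator call with random tempos, and `pick_closest` uses tempo distance as a placeholder for what is, in practice, a person listening and choosing.

```python
import random


def generate_candidates(prompt: str, count: int, seed: int = 0) -> list[dict]:
    """Stand-in for a generator call: returns fake candidates with varied tempo."""
    rng = random.Random(seed)  # seeded so the sketch is repeatable
    return [
        {"id": i, "prompt": prompt, "tempo_bpm": rng.randint(55, 150)}
        for i in range(count)
    ]


def pick_closest(candidates: list[dict], target_bpm: int) -> dict:
    """Filter step: keep the candidate whose tempo is nearest the target."""
    return min(candidates, key=lambda c: abs(c["tempo_bpm"] - target_bpm))


candidates = generate_candidates("slow emotional piano", count=6)
best = pick_closest(candidates, target_bpm=65)
print(best["id"], best["tempo_bpm"])
```

The user's effort sits in the selection function and in deciding when to regenerate, not in constructing any single candidate.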

Where Lyrics Input Changes The Experience

At some point, many users move beyond simple prompts and start using structured text. This is where something closer to a Lyrics to Music AI experience emerges.

Structured Text Creates Narrative Alignment

When lyrics are included:

- The system aligns melody with phrasing

- Sections like verse and chorus become clearer

- Emotional pacing improves
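
One way to picture "structured" lyrics is as text split into labeled sections before any music is generated. The sketch below assumes sections are marked with bracketed tags such as `[Verse]` and `[Chorus]`; that tag format, the `split_sections` function, and the sample lyrics are illustrative assumptions, not the input format of any particular platform.

```python
import re


def split_sections(lyrics: str) -> list[tuple[str, list[str]]]:
    """Group lyric lines under the most recent [Section] tag."""
    sections: list[tuple[str, list[str]]] = []
    for raw in lyrics.splitlines():
        line = raw.strip()
        if not line:
            continue  # blank lines only separate sections visually
        tag = re.fullmatch(r"\[(.+)\]", line)
        if tag:
            sections.append((tag.group(1), []))
        elif sections:
            sections[-1][1].append(line)
        else:
            # Lines before any tag go into a placeholder section.
            sections.append(("Untitled", [line]))
    return sections


# Made-up lyrics used purely as sample input.
sample = """[Verse]
Streetlights fade behind the rain
I keep walking all the same

[Chorus]
Hold on, hold on"""

for name, lines in split_sections(sample):
    print(name, len(lines))
# Verse 2
# Chorus 1
```

With sections separated like this, it becomes easier to see how a system could treat a chorus differently from a verse when shaping melody and pacing.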

Interpretation Still Plays a Role

Even with lyrics:

- Tone may vary

- Delivery style changes between generations

- Multiple outputs are still necessary

This adds expressive variation but also unpredictability.

Comparison With Traditional Music Production Methods

AI-based and traditional workflows are not replacements for each other. They can be used together, or chosen according to what a project requires. The comparison below summarizes the differences:

| Aspect | Traditional Workflow | AI-Based Workflow |
| --- | --- | --- |
| Entry Barrier | High | Low |
| Time To Output | Long | Short |
| Creative Control | Precise | Indirect |
| Iteration Cost | High | Low |
| Output Diversity | Limited | Broad |

The comparison highlights a trade-off rather than a replacement.

Where This Approach Works Best

Traditional music creation can be very time-consuming. AI music generators are most effective in situations where time is limited but flexibility and volume are needed:

Fast Content Production Needs

Creators working on:

- Short videos

- Background tracks

- Repeated content formats

benefit from speed and variation.

Idea Exploration And Early Drafting

Instead of imagining outcomes:

- You generate them

- Evaluate quickly

- Adjust direction

This reduces uncertainty early on.

Non-Technical Creative Workflows

People who think in narratives rather than sounds can:

- Express ideas naturally

- Rely on the system for execution

This opens access to music creation.

Limitations That Become Clear Over Time

While the process looks smooth and simple at first glance, it has limitations worth considering. Consistency and control tend to fluctuate with continued use:

Prompt Sensitivity And Variability

Small wording changes can lead to:

- Very different outputs

- Inconsistent tone

This requires careful phrasing.

Limited Fine Control Over Structure

You cannot always define the following:

- Exact chord changes

- Precise arrangement timing

Control exists, but indirectly.

Iteration Remains Necessary

In my experience:

- First outputs are rarely final

- Multiple attempts improve quality

This introduces a different kind of effort.

What This Suggests About Creative Tools

The deeper shift is not about music, but about interfaces. It shows how manual control is giving way to intent-based systems.

In simple terms, we are moving from:

- Control-heavy systems

To:

- Intent-driven systems

Music generation is one example of this broader pattern.

How Creative Roles Are Quietly Changing

The role of the creator is being reshaped by AI tools. Instead of building everything manually, users lean on automation and simply:

- Define intent

- Evaluate outputs

- Select outcomes

Creativity becomes partially curatorial.

A Practical Way To Understand The Tool

It is tempting to see this tool as a bridge between ideas and execution. In practice, it may be more useful to think of it as a translation layer between language and sound rather than a replacement for traditional production.

Why This Matters Beyond Immediate Use

The real impact goes beyond music: it reduces the gap between idea and output and changes how people start projects. That alone can influence:

- How often ideas are tested

- How quickly concepts evolve

- How accessible creative work becomes

The tool does not remove complexity. It simply moves it out of the user’s immediate path.

Conclusion

The benefits of an AI music generator are not limited to producing songs in less time. It helps turn tracks that previously stayed at the planning stage into something you can actually hear. It interprets the idea behind the input and returns a usable result.

Earlier, creators often got stuck at the first step: converting the initial idea into music. With the right prompt, followed by careful selection and refinement of the result, the music can be improved further.

In short, AI music generators shift the focus from manual composing to creative results.