The Future of Data Center Structured Cabling: Scaling for 40G/100G Demands

Not only is the digital world expanding, but it is also going through a significant architectural change. The physical layer—the actual wires and glass in your racks—is under pressure as cloud computing, AI processing, and 5G demand previously unheard-of speeds. Data centre structured cabling must be completely rethought to transition from 10G to 40G or 100G. This is not as easy as simply replacing a transceiver.

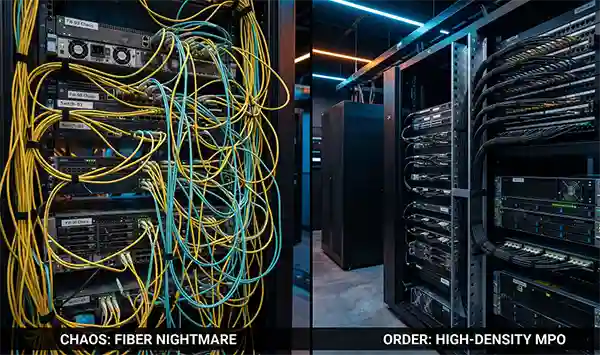

You are dealing with more than just an aesthetic issue if your existing infrastructure appears to be a “cable spaghetti” nightmare. It is a bottleneck in cooling, maintenance, and scalability that may delay your next major upgrade.

Key Takeaways

- Exploring the bandwidth explosion and the density crisis.

- Analyzing the core pillars of modern structured cabling.

- Transitioning to parallel optics and multi-fiber arrays.

- Figuring out ways to solve the cable spaghetti problem.

The Bandwidth Explosion and the Density Crisis

The physical layer of the data center is currently hitting a structural breaking point. We’ve moved past the era where a few extra patch cords could solve a capacity spike; today, the sheer volume of data being pushed by AI networks and high-frequency trading platforms has turned rack space into the most expensive real estate on the planet. Walk through a legacy facility today and you would see cable trays literally sagging under the weight of the copper and duplex fibre added for 40G and 100G migrations.

This crisis has harsh maths behind it. Even though they have been dependable for many years, traditional duplex LC connectors are unable to meet the port density needed for contemporary switches. To achieve 100G throughput using standard duplex methods, you would need a sprawling web of 10 separate fiber pairs. That is a recipe for a thermal nightmare and a management disaster.

This is precisely why the MPO12 interface has become the indispensable workhorse of the modern rack. Engineers can achieve a 6x increase in density over conventional LC setups by condensing 12 individual fibres into a single connector that is about the size of a fingernail. Think of it like replacing a 12-lane surface highway with a single high-speed underground tunnel; the throughput remains, but the surface-level chaos disappears. According to data from the 2024 Optical Connectivity standard report (see reference #1 at the end), migrating to these multi-fiber arrays can reduce cable bulk by as much as 72% in high-density environments.
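The 6x figure is simple arithmetic on fibers per connector, and a minimal sketch makes it easy to rerun for your own fiber counts. The function names and the 144-fiber row below are hypothetical, chosen only for illustration; the sketch counts fibers per connector and says nothing about panel footprint.

```python
# Fibers carried per connector; both values come from the text above.
FIBERS_PER_MPO12 = 12
FIBERS_PER_DUPLEX_LC = 2


def density_gain(fibers_per_new: int, fibers_per_old: int) -> float:
    """Return how many times more fibers one new connector carries."""
    return fibers_per_new / fibers_per_old


def connectors_needed(total_fibers: int, fibers_per_connector: int) -> int:
    """Round up: a partly used connector still occupies a panel position."""
    return -(-total_fibers // fibers_per_connector)


if __name__ == "__main__":
    print(density_gain(FIBERS_PER_MPO12, FIBERS_PER_DUPLEX_LC))  # 6.0
    # Hypothetical row that needs 144 fibers in total.
    print(connectors_needed(144, FIBERS_PER_DUPLEX_LC))  # 72
    print(connectors_needed(144, FIBERS_PER_MPO12))      # 12
```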

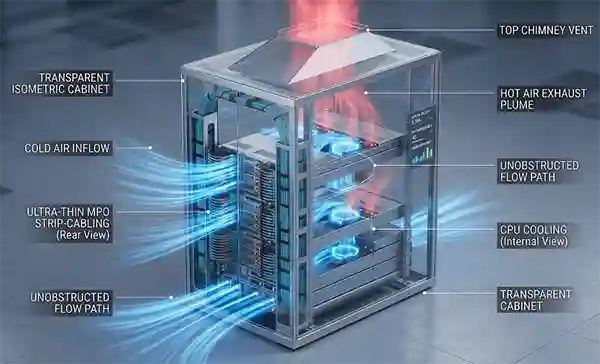

That said, density isn’t just about saving space. It’s about airflow. Switching to an MPO12 backbone clears the “dead zones” in the rear of the server cabinet that typically trap heat. It’s a bit of a domino effect: less cable bulk leads to better static pressure, which leads to lower fan speeds and, ultimately, a healthier bottom line on your cooling bill.

Still, the transition isn’t without its quirks. You can’t just plug and play without a solid grasp of polarity. However, for anyone serious about uptime, the trade-off—a clean, scalable, and manageable architecture—is no longer optional.

The Core Pillars of Modern Structured Cabling

Infrastructure is the one part of your data centre that you shouldn’t have to rebuild each time a new chip architecture is released. If you’re building for today’s 100G needs without looking at the 800G horizon, you aren’t building; you’re just stalling.

Modular Design and Scalability: Planning for a 10-Year Lifecycle.

The “rip and replace” cycle is a massive drain on capital and sanity. A truly modular system rests on one idea: the “business ends” (the cassettes and adapter plates) evolve while the backbone stays fixed. Central to this strategy is the MPO12 backbone. Because it provides a base-12 trunk, it allows you to break out into 10G, 40G, or even 120G paths just by swapping a pluggable module.

Think of it like the electrical wiring in a house; you don’t tear out the copper in the walls just because you bought a new toaster. At most, you change the outlet. According to industry studies conducted by the Fibre Optic Infrastructure Council, a pre-terminated modular approach can reduce deployment time by 63% compared to field-splicing. That’s a massive win when you’re trying to meet a strict go-live date.

Airflow and Cooling: How High-Density Cabling Improves Thermal Efficiency.

Cables are the silent killers of server fans. In a crowded rack, a thick bundle of legacy patch cords covers your intake vents like a wool blanket. But when you collapse those twelve individual fibers into a single MPO12 connection, you’re suddenly dealing with a footprint that’s just a bit wider than a standard pencil.

So, the air finally moves. By reducing the physical profile of the cabling, you lessen the static pressure that makes fans work harder. It’s a simple trade: less plastic in the way means more cold air reaching the CPUs. According to the 2025 Thermal Management Review, optimizing cable density can lower fan energy consumption by nearly 14% in high-density rows.
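To get a feel for how fan speed and fan energy relate, here is a rough sketch based on the ideal fan affinity law, under which power scales with the cube of speed. That law is an assumption brought in for illustration, not something the review above states, and real fans in real racks will deviate from it. The function names are hypothetical.

```python
def power_ratio(speed_ratio: float) -> float:
    """Ideal fan affinity law: power scales with the cube of speed."""
    return speed_ratio ** 3


def speed_ratio_for_saving(power_saving: float) -> float:
    """Speed ratio that yields a given fractional power saving (ideal case)."""
    if not 0 <= power_saving < 1:
        raise ValueError("power_saving must be in [0, 1)")
    return (1 - power_saving) ** (1 / 3)


if __name__ == "__main__":
    ratio = speed_ratio_for_saving(0.14)  # the 14% figure quoted above
    print(f"{ratio:.3f}")                 # 0.951, i.e. fans about 5% slower
```

Under that idealized assumption, a small drop in fan speed is enough to account for a double-digit drop in fan energy, which is why clearing the airflow path pays off disproportionately.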

Still, it’s not just about the energy bill. It’s about hardware longevity. Compare the “hot spot” map of a rack fed by slim trunks with that of one choked by duplex zip-cords, and the difference would be noticeable.

Transitioning to Parallel Optics and Multi-Fiber Arrays

The duplex fiber model that served us for decades is finally hitting a physical ceiling. Sending and receiving data used to be a straightforward two-lane exchange, but 2026’s bandwidth demands call for a multi-lane superhighway.

The End of Duplex Fiber? Moving Toward Multi-Fiber Push-on Standards.

While we may not be “killing” duplex fibre, it is undoubtedly being pushed to the network’s periphery. When you’re pushing 400G or even 800G across a data center spine, trying to use individual LC connectors is like trying to drain a swimming pool with a dozen separate straws. It’s inefficient and, frankly, a bit of a nightmare to manage.

Parallel optics changes the game by splitting a single data stream across multiple fibers that transmit simultaneously. Two things are driving this change: the physical limits of transceiver ports and the need for speed. According to a 2025 Ethernet Alliance roadmap, over 84% of new high-speed data center deployments now favor multi-fiber array connectors over traditional serial duplexing.

Understanding the Base-12 Standard

So, how do we organize these fibers without creating total chaos? This is where the Base-12 architecture comes into play. Engineers create a modular ‘building block’ that perfectly complements the current generation of switching hardware by grouping fibres in increments of twelve.

The MPO12 interface offers instant relief if you’ve ever struggled with tiny individual connectors in the dark of a chilly aisle. It allows you to click twelve fibers into place with a single, satisfying “thunk.” This standard is more than just a convenience; it is a technical necessity for maintaining signal integrity across high-density links. For a deep dive into the specifics of this hardware, this guide on MPO12 fiber connectivity breaks down the polarity and pinout configurations that keep these high-speed arrays functioning.

Switching to a Base-12 system does require a methodical approach to cable management. Even so, the ability to scale from 10G to 100G without pulling new glass makes the transition an easy sell for any forward-thinking architect.
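A small planning sketch shows what grouping in twelves means in practice. It assumes a duplex link uses 2 fibers and an SR4-style parallel link uses 8 (4 transmit, 4 receive), which leaves 4 fibers of a 12-fiber trunk unlit. The class, the function, and the link counts are hypothetical, and the sketch ignores conversion modules that can reclaim those unused fibers.

```python
from dataclasses import dataclass

TRUNK_FIBERS = 12  # one MPO12 trunk


@dataclass(frozen=True)
class LinkType:
    name: str
    fibers_per_link: int


# Assumed fiber counts: duplex links use 2 fibers; SR4-style parallel
# optics use 4 transmit + 4 receive fibers.
DUPLEX_10G = LinkType("10G duplex", 2)
PARALLEL_40G = LinkType("40G parallel (SR4-style)", 8)


def plan_trunks(link: LinkType, links_needed: int) -> dict:
    """Return trunks required and fibers left unused for a set of links."""
    links_per_trunk = TRUNK_FIBERS // link.fibers_per_link
    trunks = -(-links_needed // links_per_trunk)  # ceiling division
    lit_fibers = links_needed * link.fibers_per_link
    return {
        "links_per_trunk": links_per_trunk,
        "trunks": trunks,
        "unused_fibers": trunks * TRUNK_FIBERS - lit_fibers,
    }


if __name__ == "__main__":
    print(plan_trunks(DUPLEX_10G, 48))    # 8 trunks, 0 unused fibers
    print(plan_trunks(PARALLEL_40G, 16))  # 16 trunks, 64 unused fibers
```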

Solving the “Cable Spaghetti” Problem

A cluttered rack is more than just an eyesore; it is a direct threat to your network’s uptime and your team’s sanity. You’re not just looking at a mess when you open a cabinet’s back door and discover a tangled web of yellow and aqua zip cords obstructing each status LED; you’re looking at a thermal barrier and a troubleshooting nightmare.

Reducing Physical Footprint with High-Density Trunking.

The most effective way to kill the “spaghetti” is to stop running individual patch cords across long spans. High-density trunking allows you to consolidate those hundreds of messy leads into a few sleek, manageable cables. By utilizing the MPO12 standard, you can collapse twelve separate fiber strands into a single jacket that is roughly the same diameter as a traditional Category 6 cable.

It’s essentially the difference between trying to carry twelve loose pencils in your hands versus keeping a set in a single, organized box. You save an incredible amount of volume in the cable trays. According to a 2024 survey by the Data Center Infrastructure Group, facilities that switch to high-density trunking see a 67% reduction in cable volume within their overhead pathways.

So, instead of a three-inch thick bundle of duplex fiber choking your airflow, you have a single MPO12 trunk that is just over 4.5 mm in diameter. That said, the space savings aren’t just for show. Clearing that bulk allows the cold aisle air to actually reach the equipment it was intended to cool, rather than being deflected by a wall of plastic.

The Role of Patch Panels and Cassettes in a Streamlined Architecture.

The real magic happens at the termination point. The “brain” of a structured cabling system is made up of plug-and-play cassettes and modular patch panels, which convert those high-density trunks back into functional ports. You plug the MPO12 trunk into the back of a cassette, and the internal fiber fan-out splits it out into six duplex LC ports on the front.

It is a remarkably clean handoff. If a single server needs to be moved or upgraded, you only touch a short patch cord at the front of the rack, never the main backbone. This “zone” cabling approach significantly reduces human error. Still, the beauty is in the flexibility; if you decide to jump from 10G to 40G, you often just swap the cassette without ever pulling a new trunk through the ceiling.
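The twelve-fibers-to-six-ports breakout inside a cassette can be sketched as a simple lookup. The consecutive pairing used here (fibers 1-2 to port 1, 3-4 to port 2, and so on) is an assumption for illustration; real cassettes pair fibers according to their polarity method, so check the vendor's documentation. Both function names are hypothetical.

```python
def cassette_port_map(fibers: int = 12) -> dict:
    """Map each front duplex port to its (fiber_a, fiber_b) on the rear MPO.

    Assumes consecutive pairing (1-2, 3-4, ...); actual pairing depends
    on the cassette's polarity method.
    """
    if fibers <= 0 or fibers % 2:
        raise ValueError("fiber count must be a positive even number")
    return {port: (2 * port - 1, 2 * port) for port in range(1, fibers // 2 + 1)}


def port_for_fiber(fiber: int, fibers: int = 12) -> int:
    """Return the front port that a given trunk fiber lands on."""
    if not 1 <= fiber <= fibers:
        raise ValueError("fiber position out of range")
    return (fiber + 1) // 2


if __name__ == "__main__":
    print(cassette_port_map())  # {1: (1, 2), 2: (3, 4), ..., 6: (11, 12)}
    print(port_for_fiber(7))    # 4
```

A map like this is also what makes tracing a failed link quick: a bad fiber position from a test report translates straight to a front-panel port.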

In comparison to point-to-point configurations, modular environments encounter 42% fewer unintentional disconnections during routine maintenance, according to a 2025 technical white paper from the Structured Cabling Association. You’d notice the difference the moment you had to trace a single failed link in a crisis—no more tugging on a “spaghetti” strand and hoping the whole rack doesn’t come down with it.

Best Practices for Future-Proofing Your Infrastructure

Building a data center without a long-term fiber strategy is like paving a road that you know will be too narrow for cars in eighteen months. You aren’t just installing glass; you are laying the foundation for every AI model, database query, and video stream your business will ever run.

Selecting the Right Fiber Grade (OM3, OM4, vs. Single Mode).

Choosing your fiber grade is the most expensive decision you’ll make in the closet. Even though OM3 was the darling of the 10G era, anyone interested in 100G or 400G at distances longer than a few racks will essentially find it to be a legacy product. For most enterprise data centers, OM4 is the baseline, but the industry is increasingly leaning toward Single Mode (OS2) for everything.

The reason is simple: reach. Multimode fiber uses a “wider” light path that eventually causes signal blurring as speeds climb. OM4 will provide you with a comfortable 150-meter reach if you’re running a 40G link over MPO12 trunks, which is sufficient for the majority of rows. But as soon as you touch 400G, that distance drops off a cliff.

Nearly 58% of new hyperscale builds have switched solely to single mode fibre in order to avoid the inevitable “bandwidth ceiling” of multimode, according to the 2025 Optical Fibre Deployment Census. Still, multimode remains popular for shorter runs because the transceivers are just a bit cheaper. It’s a classic balancing act between day-one costs and day-one-thousand scalability.
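A reach check is easy to script before you commit to a fiber grade. The table below uses commonly published IEEE 802.3 short-reach multimode figures; only the 150 m value for 40G over OM4 comes from this article, so treat the rest as assumptions to confirm against your transceiver datasheets. The function names are hypothetical.

```python
# Maximum reach in metres by (Ethernet standard, fiber grade).
# Commonly published figures; verify against the transceiver datasheet.
REACH_M = {
    ("10GBASE-SR", "OM3"): 300,
    ("10GBASE-SR", "OM4"): 400,
    ("40GBASE-SR4", "OM3"): 100,
    ("40GBASE-SR4", "OM4"): 150,
    ("100GBASE-SR4", "OM3"): 70,
    ("100GBASE-SR4", "OM4"): 100,
}


def link_fits(standard: str, grade: str, run_m: float) -> bool:
    """True if a run of run_m metres is within the listed reach."""
    return run_m <= REACH_M[(standard, grade)]


def grades_for(standard: str, run_m: float) -> list:
    """List the multimode grades in the table that cover the run."""
    return sorted(
        grade
        for (std, grade), reach in REACH_M.items()
        if std == standard and run_m <= reach
    )


if __name__ == "__main__":
    print(link_fits("40GBASE-SR4", "OM4", 120))  # True
    print(link_fits("40GBASE-SR4", "OM3", 120))  # False
    print(grades_for("100GBASE-SR4", 90))        # ['OM4']
```

An empty list from `grades_for` is the signal that the run belongs on single mode.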

Testing and Certification: Why Tier 1 and Tier 2 Testing is Non-Negotiable.

You cannot manage what you do not measure. In a high-density setting using MPO12 connectors, a single speck of dust on one of those twelve fibre end faces can take down the link.

Tier 1 testing, which measures total insertion loss, is the bare minimum. But if you want to sleep at night, Tier 2 testing with an OTDR (Optical Time Domain Reflectometer) is where the real insurance lies. It’s like a medical X-ray for your network; it shows you exactly where a bend is too tight or where a connector isn’t quite seated.
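A Tier 1 result only means something when compared against a loss budget, so it helps to estimate the expected loss before testing. The sketch below adds fiber attenuation to the loss of each mated connector pair. The default attenuation, the per-connector loss, and the 1.5 dB budget are placeholder assumptions; substitute the values from your cable specification and the application standard. The function names are hypothetical.

```python
def estimated_loss_db(
    length_m: float,
    mated_pairs: int,
    atten_db_per_km: float = 3.0,   # placeholder multimode value at 850 nm
    loss_per_pair_db: float = 0.5,  # placeholder per mated connector pair
) -> float:
    """Estimate channel insertion loss: fiber loss plus connector loss."""
    fiber_loss = length_m / 1000 * atten_db_per_km
    connector_loss = mated_pairs * loss_per_pair_db
    return fiber_loss + connector_loss


def tier1_pass(measured_db: float, budget_db: float) -> bool:
    """A link passes Tier 1 if measured loss does not exceed the budget."""
    return measured_db <= budget_db


if __name__ == "__main__":
    expected = estimated_loss_db(length_m=100, mated_pairs=2)
    print(f"{expected:.2f} dB expected")       # 1.30 dB expected
    print(tier1_pass(1.2, budget_db=1.5))      # True
    print(tier1_pass(1.6, budget_db=1.5))      # False
```

A measured value well above the estimate, even if it still passes, is a good reason to inspect and clean the connectors before the link goes live.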

You’d notice that a “passed” Tier 1 test can still hide a reflection issue that will cause intermittent “ghost” errors once the network is under full load. According to data from the 2024 Network Reliability Institute, poor connector cleanliness—which Tier 1 testing missed—is the real cause of 37% of “unexplained” packet loss in new fibre installs.

Therefore, don’t take any shortcuts when getting certified. A high-speed MPO12 backbone is a high-precision instrument, and treating it like a standard patch cord is a recipe for a very long, very stressful weekend in the data center.

Conclusion

Your network is only as fast as its most congested bottleneck. For too long, leadership has viewed structured cabling as a “facilities” expense—a line item buried alongside HVAC and lighting—rather than a core engine of digital growth.

But the reality of 2026 is that infrastructure is the most tangible asset in your digital transformation strategy. When a company fails to scale its physical layer, every other high-level investment—from generative AI clusters to real-time data analytics—begins to stutter.